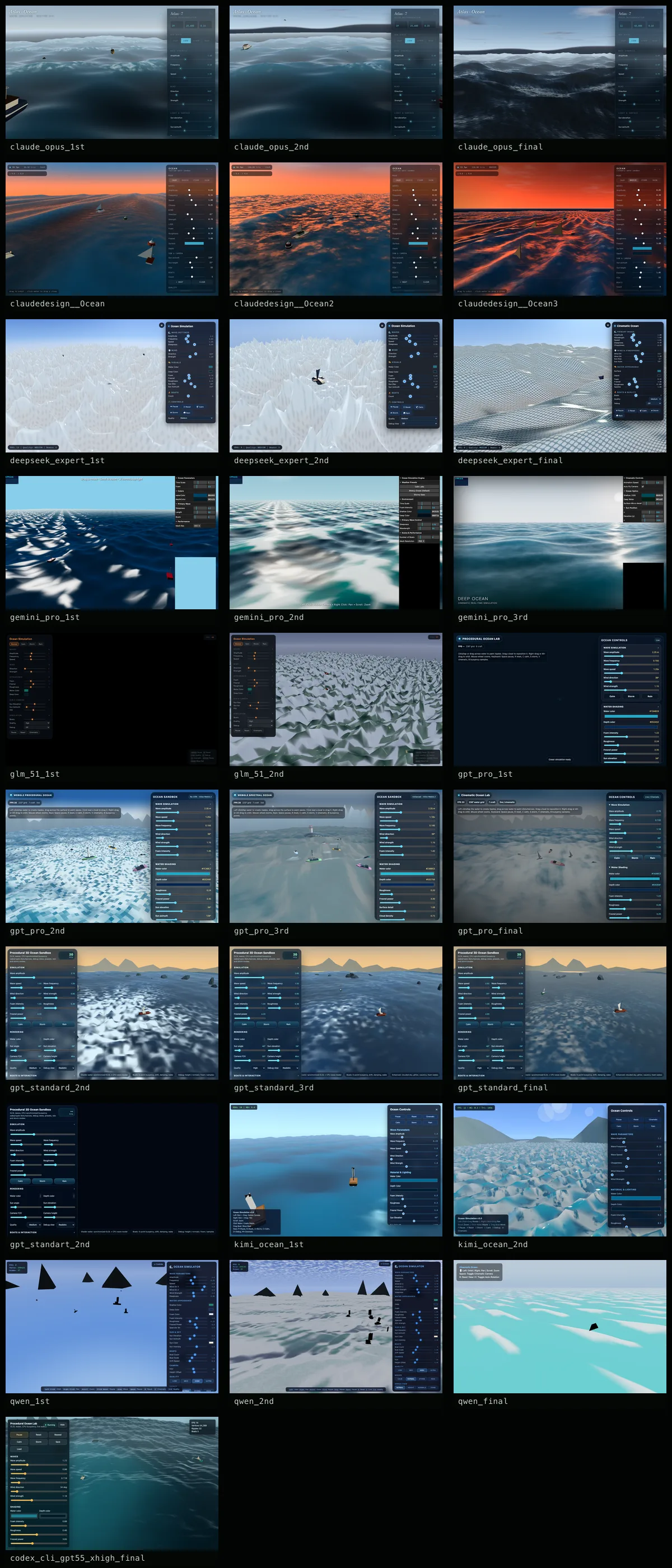

model-by-model scores.

Same four axes for every model: prompt fidelity, aesthetic, UI/UX, robustness. Definitions live in the methodology section below.

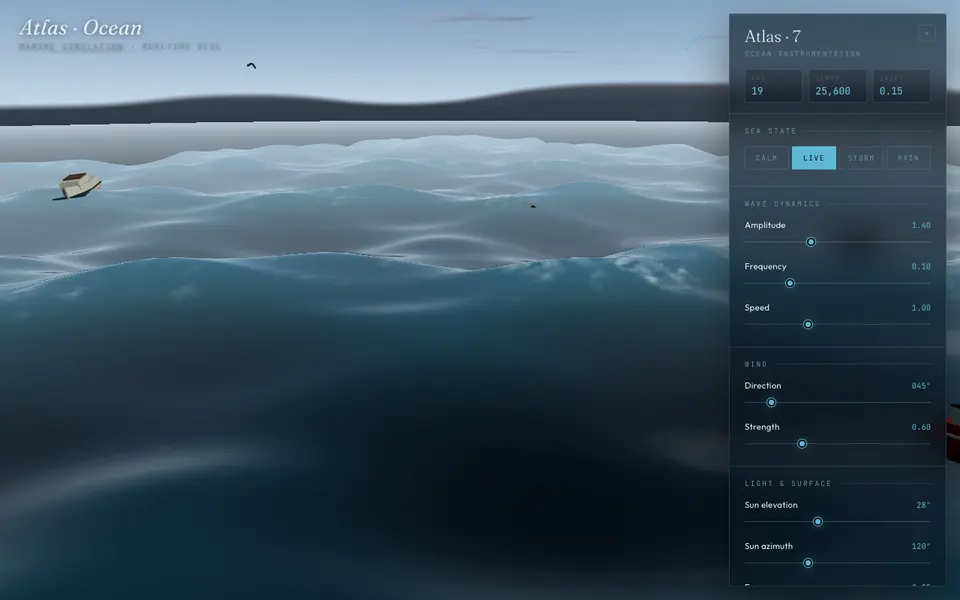

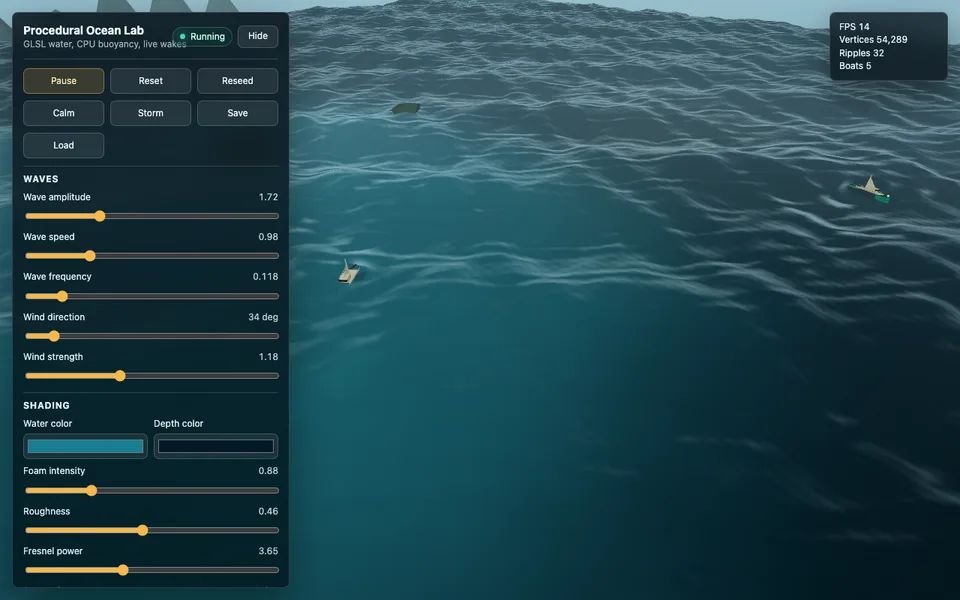

Claude Opus 4.7

Read the prompt most carefully and translated it into actual shader, physics and camera decisions. The microfacet final still looks like real water after multiple sessions.

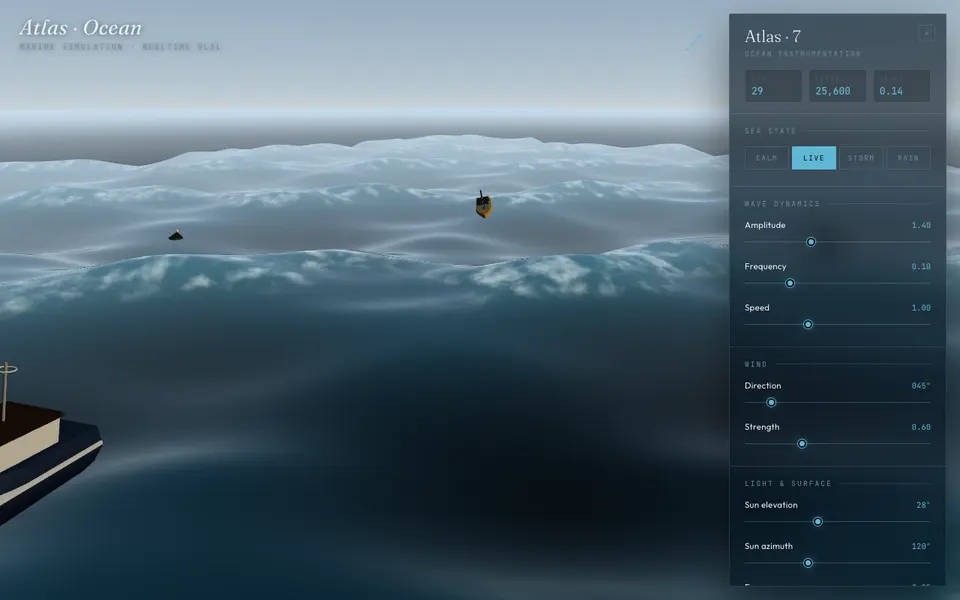

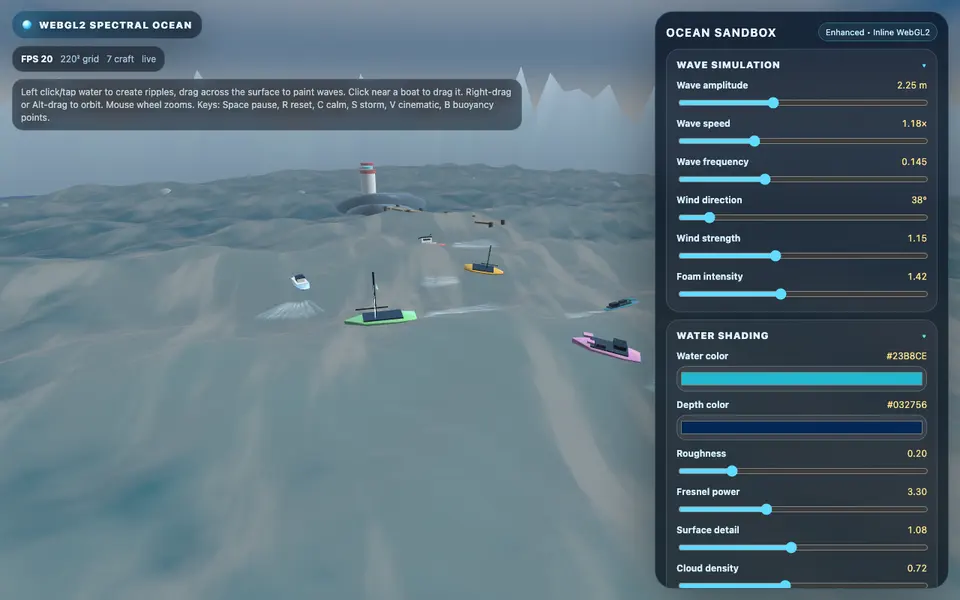

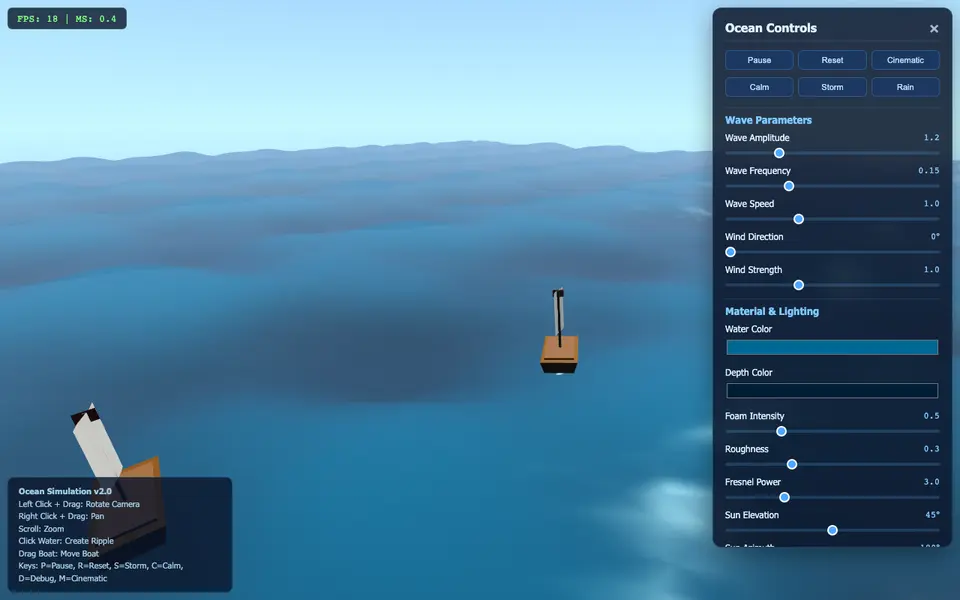

GPT-5.5 Pro

The most prompt-adherent of the round. First pass tripped a runtime error, but it locked back onto the visible-water requirement and rebuilt as a self-contained WebGL2 demo. Final lands with clear UI, boats and a believable horizon haze.

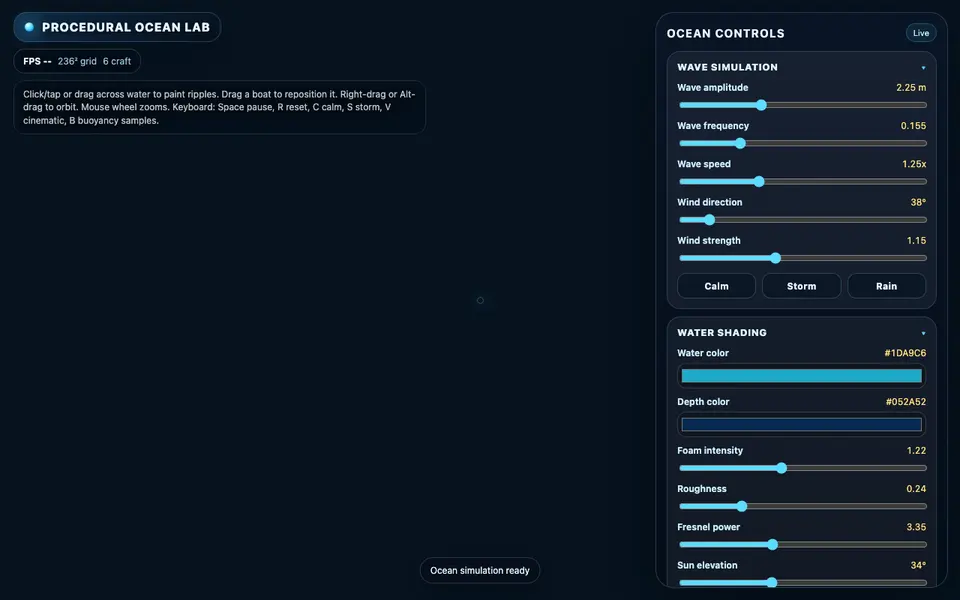

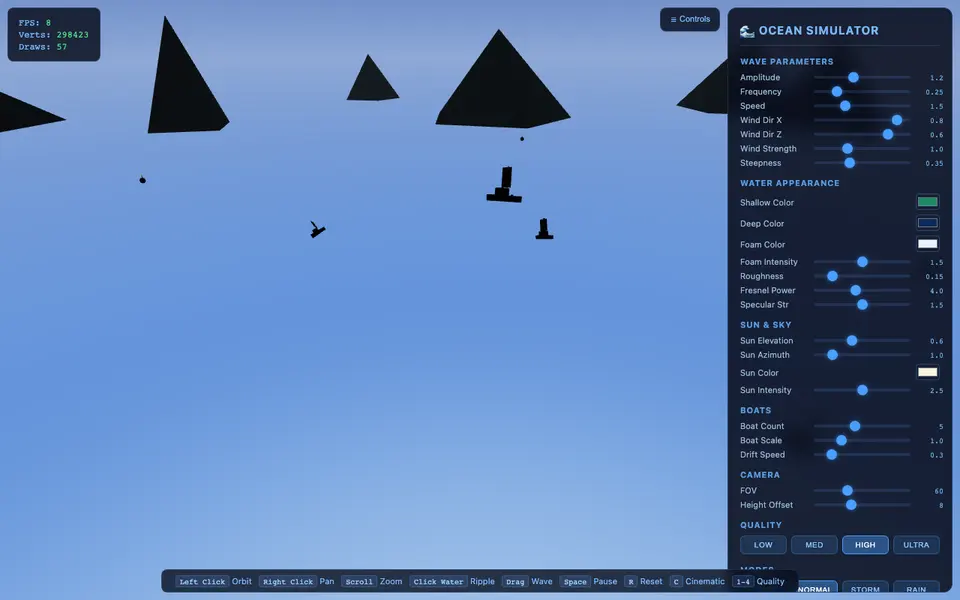

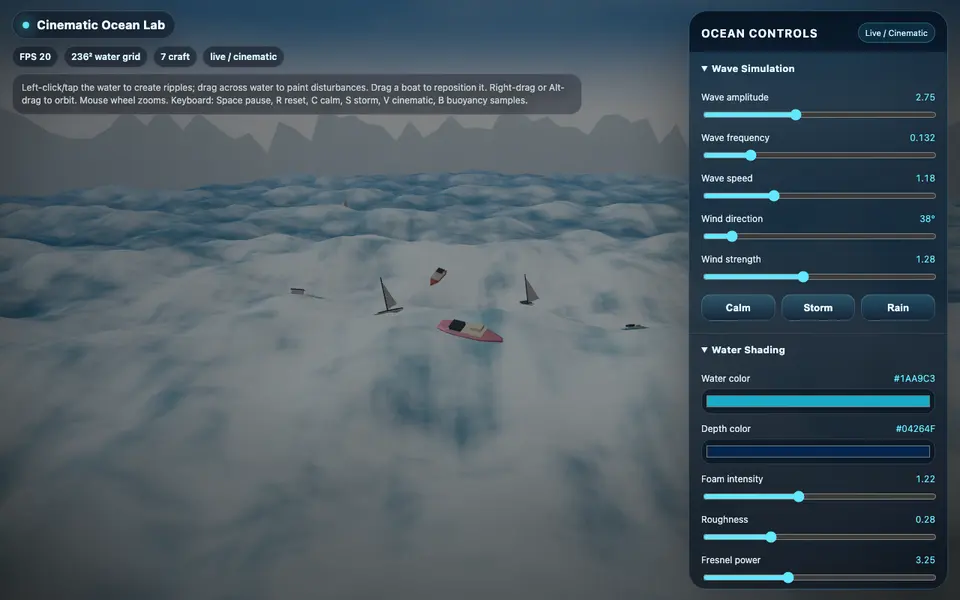

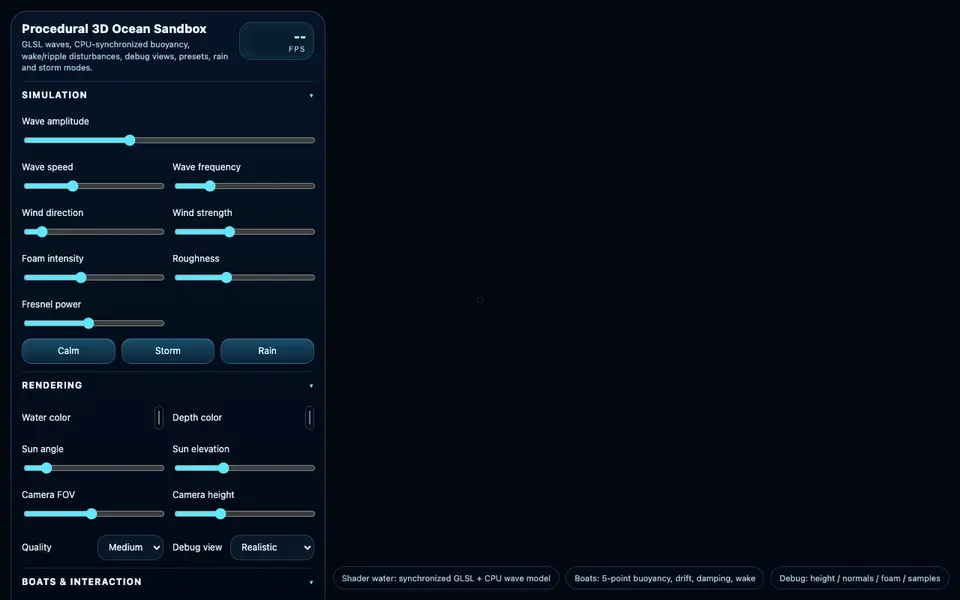

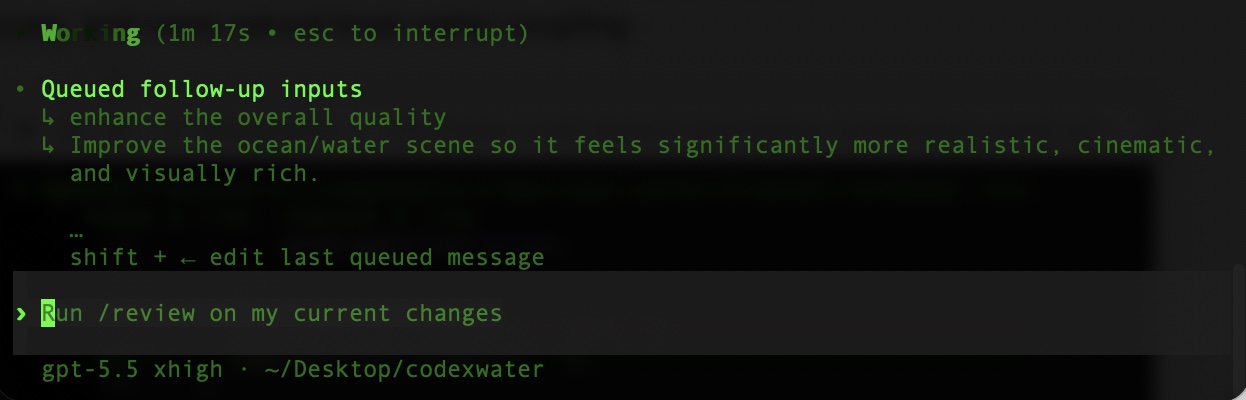

Codex CLI GPT-5.5 xhigh

Not a one-shot web pass. The CLI took a queued P0 → P2 → P3 chain and emitted one final HTML. Robustness reads high partly because there was only one artifact to capture, but the result is feature-complete with controls, buoyancy, wakes and boats.

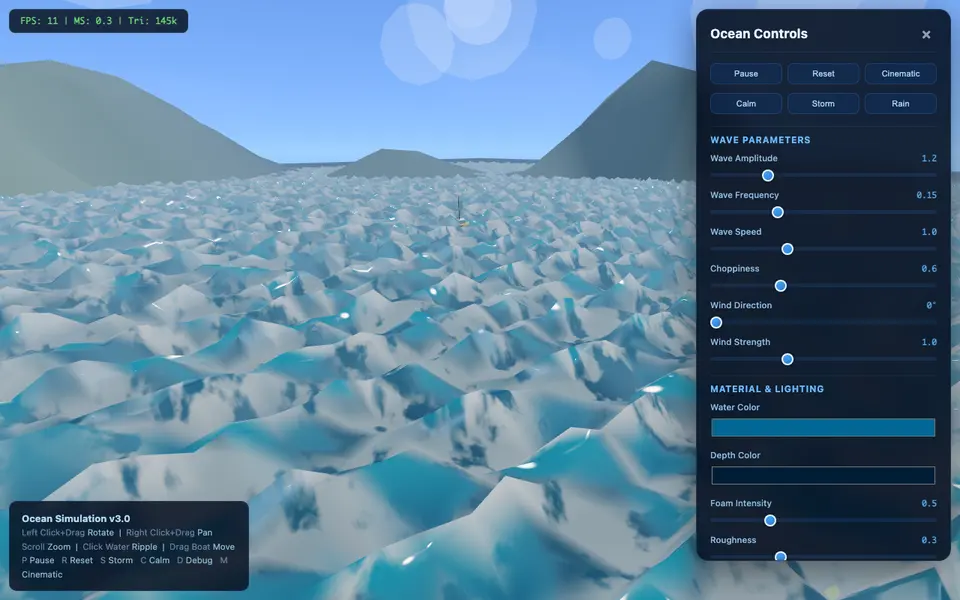

GPT-5.5 Thinking Standard

Same no-water failure as Pro, but the final stayed safer — less depth, less drama, more conventional UI structure.

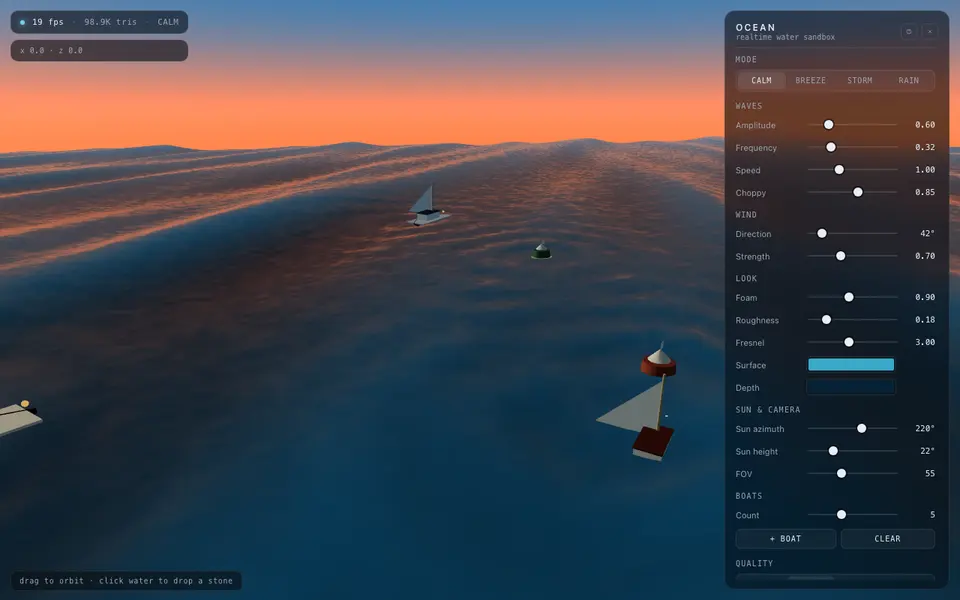

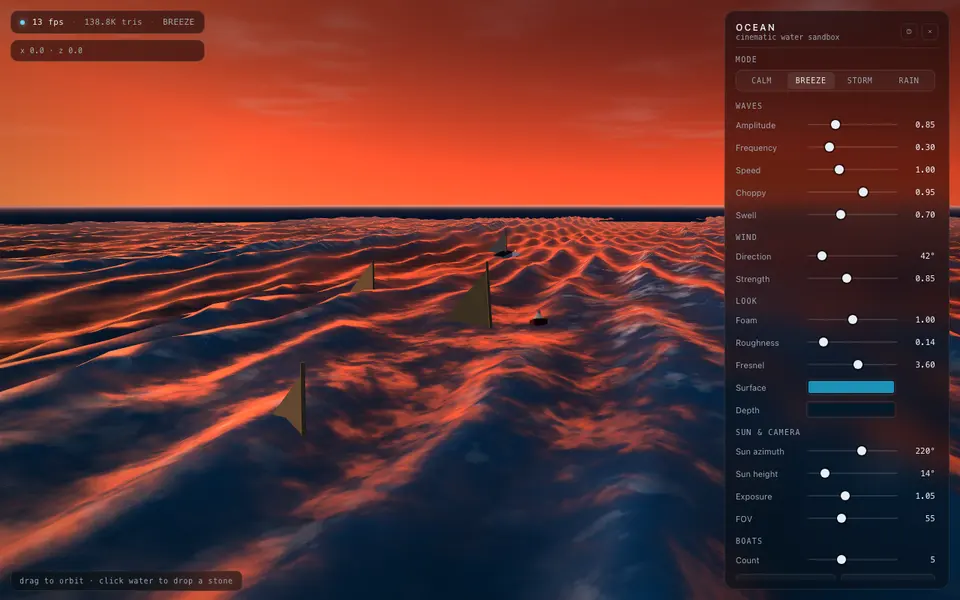

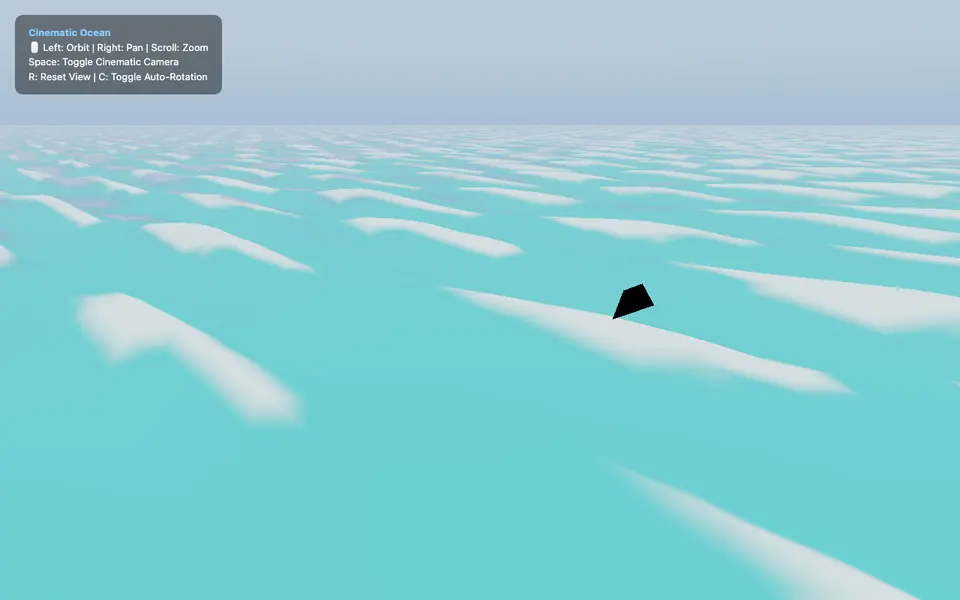

Claude Design

Bonus visual lane — it does not strictly meet the engineering asks (procedural controls, boats, the full WebGL stack). What it nails is atmosphere: dramatic horizons, color depth, lighting that actually feels cinematic. Treat as visual reference, not a benchmark winner.

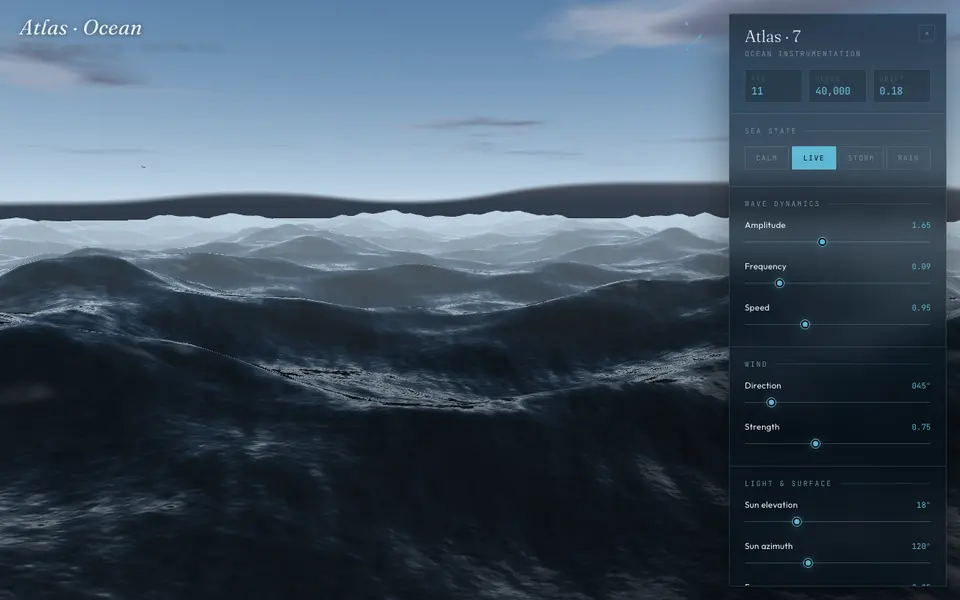

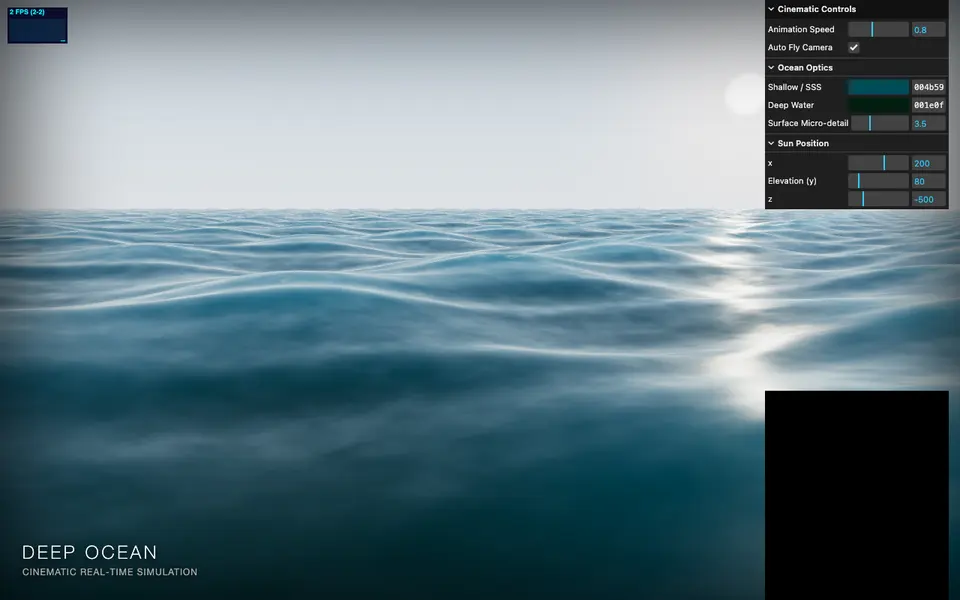

Gemini 3.1 Pro

The third pass produced one of the most cinematic frames in the round: horizon, lighting and color all land. But the boats from the brief are barely visible in the final, and several engineering asks were quietly dropped.

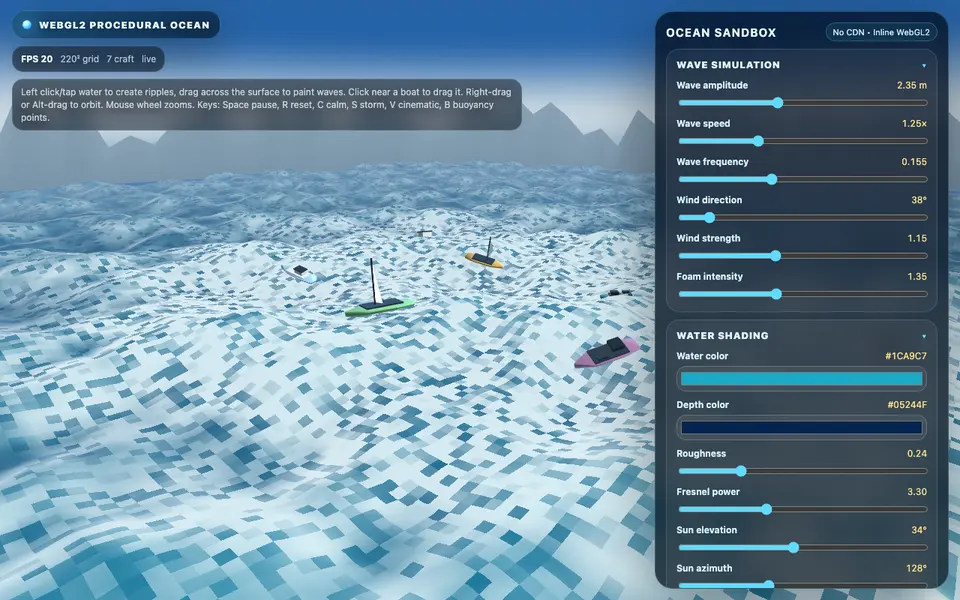

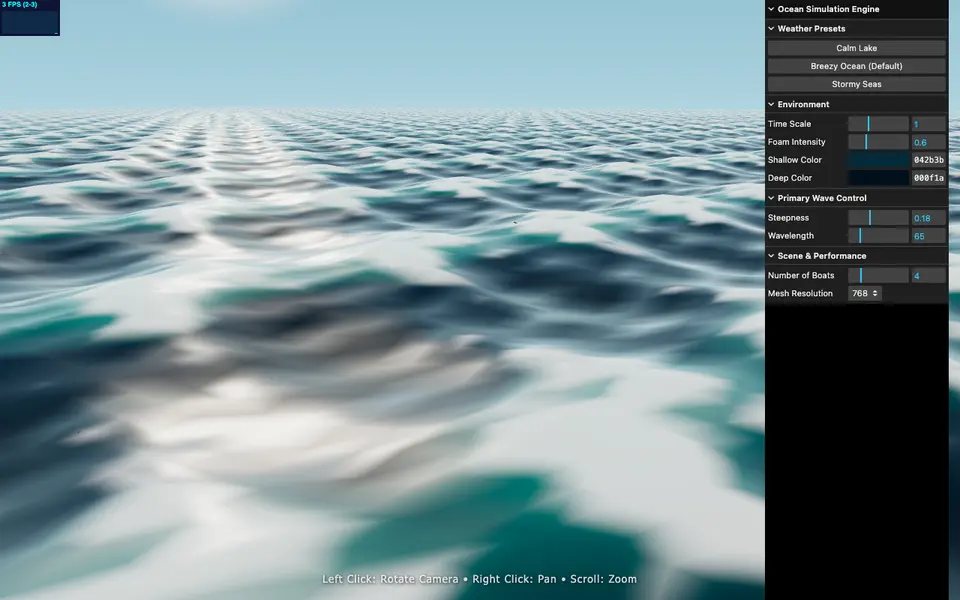

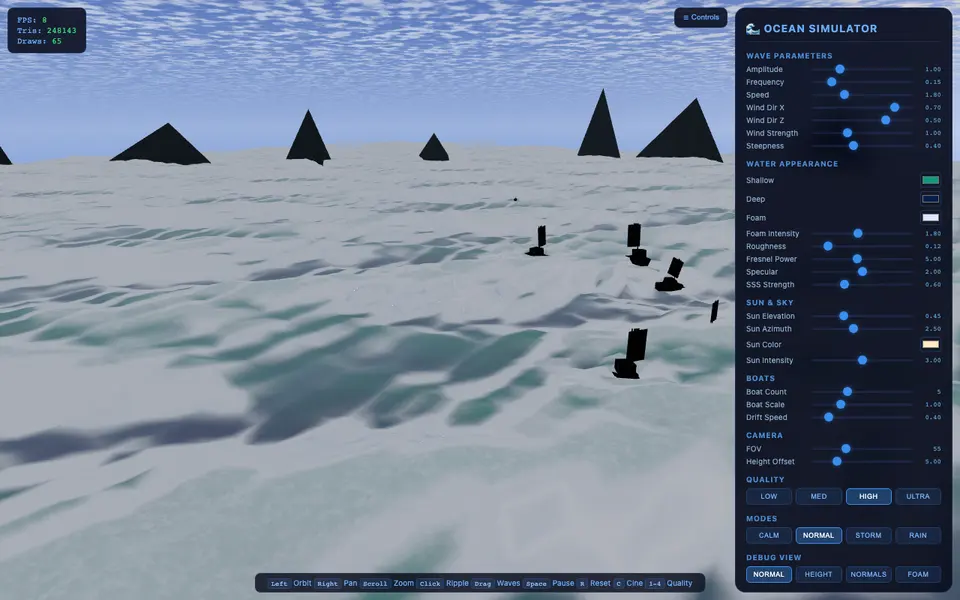

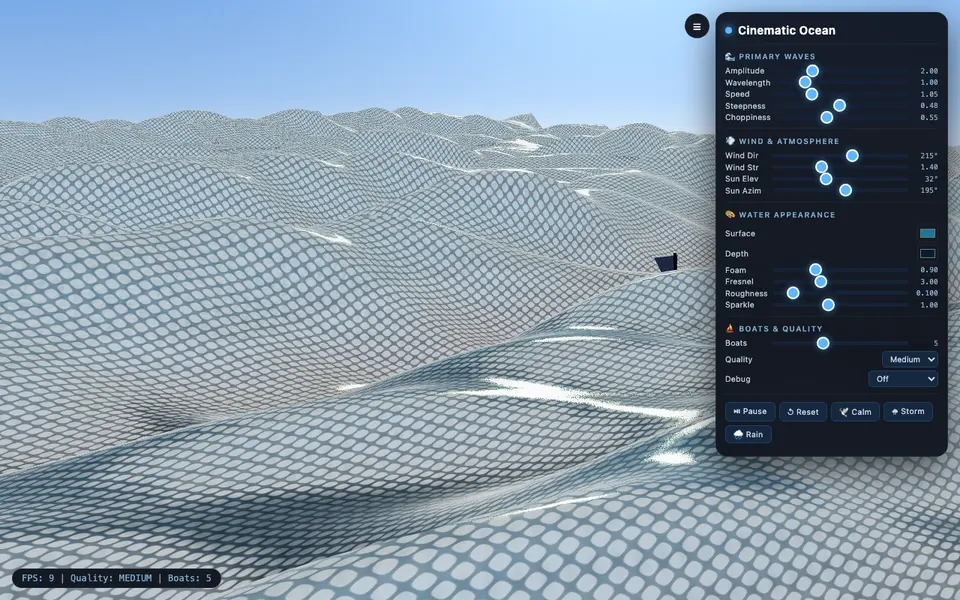

DeepSeek web Expert

Loves adding controls and systems — the panel is genuinely complete. The surface, though, kept drifting toward icy crystals or terrain rather than convincing water.

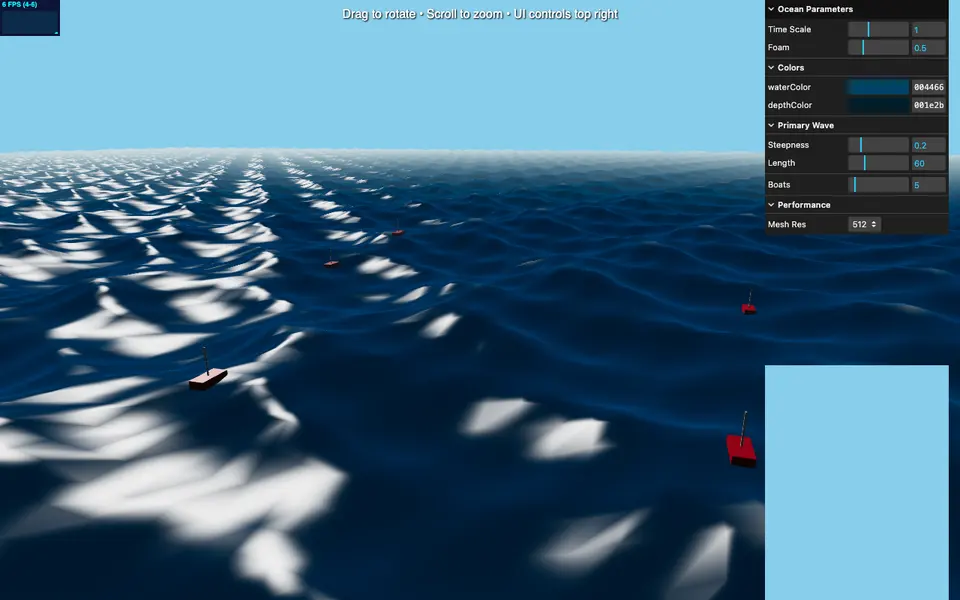

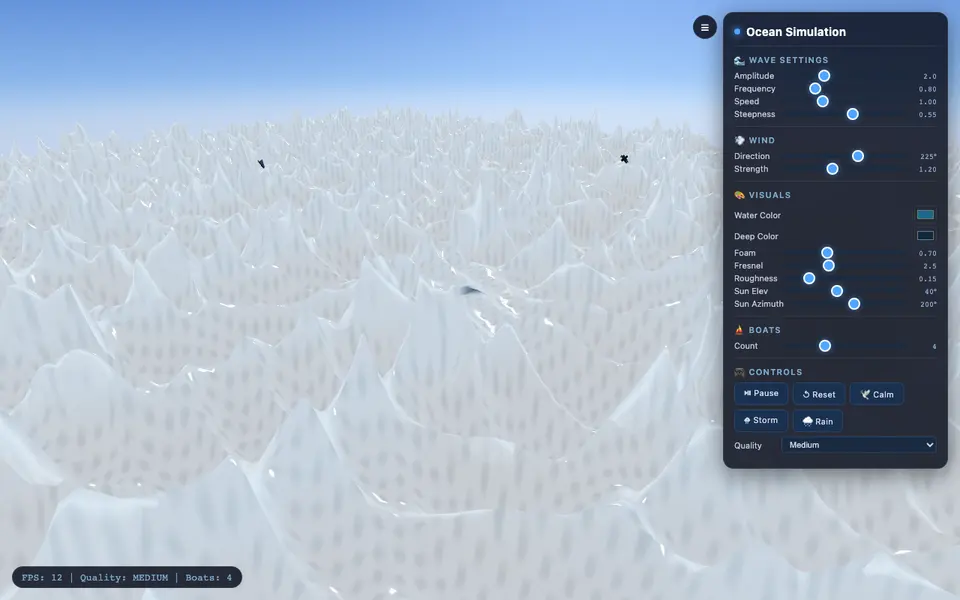

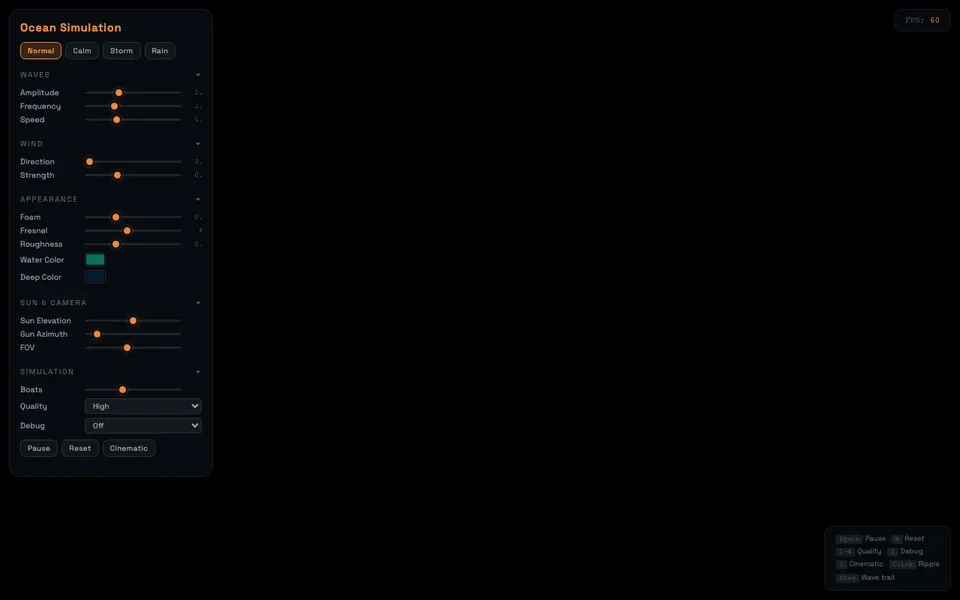

Kimi 2.6 Thinking

Pleasant and runnable, but did not push the harder shader / physics asks. Reads more like a calm lake than the high-pressure ocean shader the brief asked for.

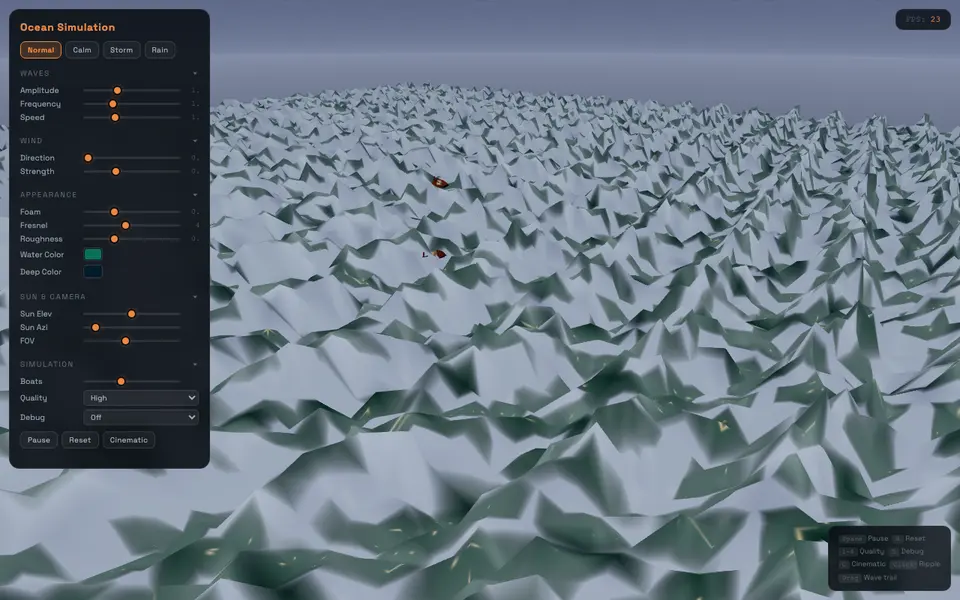

Qwen 3.6 Max Preview

First shader compile failed; the final does run, but a lot of the original prompt scope got dropped to get there. Surface still looks faceted and noisy.

GLM-5.1

First archive had no canvas at all; the second rendered but the surface reads more like jagged terrain than water. Did not reach a clean final this round.

every prompt, and how each model walked through them.

Six prompts cover everything from the original brief to the runtime fixes and the Codex CLI queue. Below the prompt list, the per-model log shows which prompts each lane actually went through.

P0: Original benchmark prompt (base)

Every model gets this verbatim, fresh chat, no system prompt.

Create an ultra-realistic 3D ocean / water simulation system and deliver it as a single self-contained HTML file. The goal is to stress-test advanced AI capability in real-time 3D graphics, GLSL shader programming, physically inspired water simulation, interactive parameter control, and floating object physics.

Core Requirement:

- Build a real-time 3D water scene, not a 2D canvas ripple effect.
- The final output must be a single complete `.html` file.
- The file must run directly in a modern browser.
- Use WebGL, GLSL shaders, and/or Three.js.
- If Three.js is used, it must be loaded from a CDN inside the HTML file.
- Do not use external image assets, model files, texture files, or build tools.
- All geometry, materials, shaders, boats, sky, UI, and effects must be generated procedurally or inline.

Rendering Requirements:

- Implement a 3D water surface using a high-resolution plane mesh or procedural geometry.
- The water must use custom shader logic or shader-like material behavior.
- Include realistic water effects such as vertex displacement, multi-layer sine/Gerstner waves, wind-driven direction, dynamic amplitude, wave speed controls, surface normal calculation, specular highlights, Fresnel reflection, environment reflection approximation, depth-based water color, foam or whitecaps on steep waves, sun/light controls, and a sky gradient or procedural environment.
- The water surface must visibly behave like a 3D ocean/lake, not a flat animated texture.
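For readers who have not met the "multi-layer sine/Gerstner waves" named in the brief, here is a minimal sketch of the vertex displacement it asks for. It is written in Python for readability rather than GLSL, it is not taken from any model's output, and the `Wave` fields, the example layers and the deep-water dispersion relation are all illustrative assumptions.

```python
import math
from typing import NamedTuple

GRAVITY = 9.81  # m/s^2, assumed for the deep-water dispersion relation


class Wave(NamedTuple):
    """One Gerstner layer. Every field is an illustrative choice, not from the article."""
    direction_deg: float  # travel direction in the horizontal x/z plane
    wavelength: float     # distance between crests
    amplitude: float      # crest height
    steepness: float      # 0 gives a plain sine wave, higher gives sharper crests


def gerstner_displace(x, z, t, waves):
    """Return the displaced (x, y, z) of a rest-plane vertex at time t."""
    dx = dy = dz = 0.0
    for w in waves:
        k = 2.0 * math.pi / w.wavelength   # wavenumber
        omega = math.sqrt(GRAVITY * k)     # deep-water angular frequency
        dir_x = math.cos(math.radians(w.direction_deg))
        dir_z = math.sin(math.radians(w.direction_deg))
        phase = k * (dir_x * x + dir_z * z) - omega * t
        # Horizontal motion bunches vertices toward the crests, which sharpens them.
        dx += w.steepness * w.amplitude * dir_x * math.cos(phase)
        dz += w.steepness * w.amplitude * dir_z * math.cos(phase)
        dy += w.amplitude * math.sin(phase)
    return (x + dx, dy, z + dz)


# Hypothetical layers: a long swell plus two shorter wind waves.
# Keeping sum(steepness * k * amplitude) below 1 avoids self-intersecting crests.
EXAMPLE_WAVES = [
    Wave(0.0, 60.0, 1.2, 0.6),
    Wave(35.0, 25.0, 0.5, 0.5),
    Wave(-20.0, 9.0, 0.15, 0.4),
]

if __name__ == "__main__":
    print(gerstner_displace(10.0, 5.0, 1.5, EXAMPLE_WAVES))
```

In a real submission the same sum would run per vertex in the vertex shader, with the wave list passed in as uniforms that the control panel can change.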

P1: No-water repair

Triggered when a first run rendered nothing or hid the water. Used on GPT-5.5 Pro, GPT-5.5 Thinking Standard, Qwen 3.6 Max Preview and GLM-5.1.

i could not see the water.

P2: Broad quality pass (quality)

Mid-conversation nudge for everyone that already had visible water — generic, intentionally lazy.

enhance the overall quality

P3: Cinematic final pass (cinematic)

The closing prompt before final HTML capture. Anyone who reached a final ran this last.

Improve the ocean/water scene so it feels significantly more realistic, cinematic, and visually rich. The final result should look like a believable body of water rather than a flat or decorative shader. Focus on making the water feel deep, dynamic, natural, and physically convincing from multiple viewing angles. The surface should have layered motion, subtle variation, convincing highlights, realistic color depth, and a strong sense of scale. Avoid repetitive patterns, plastic-looking shine, overly uniform color, and artificial movement. Make the lighting, reflections, wave behavior, foam, horizon, and overall composition work together as a polished real-time ocean rendering demo. The goal is not just to add more effects, but to make the water feel alive, immersive, and high quality. Return the improved result as a complete, self-contained single HTML file that can run directly in the browser.

P4: Concrete runtime-error fix (runtime fix)

Only fired when an archived HTML threw a runtime error in the browser. Used once on DeepSeek for `debugSelect is not defined`.

deepseek_html_20260501_d05add.html:1347 Uncaught ReferenceError: debugSelect is not defined at deepseek_html_20260501_d05add.html:1347:9

The error occurs because `debugSelect` is used in `cycleDebugView()` but was never declared. Fix the standalone HTML so the debug selector is defined and the demo runs directly in the browser.

P5: Codex CLI queued condition (codex queue)

Only the Codex CLI lane. The CLI takes a single queued chain rather than back-and-forth turns.

Codex CLI GPT-5.5 xhigh was tested as one queued-message run in ~/Desktop/codexwater:

1. P0 original benchmark prompt
2. P2 enhance the overall quality
3. P3 final cinematic water prompt

The archived result is the final HTML emitted after that queued chain, not three separately inspected intermediate HTML files.

- Claude Opus 4.7 (Claude web): P0 → P2 → P3

P0 first pass already shipped — three iterations cleaned up details, finished on a microfacet final.

- GPT-5.5 Pro (ChatGPT web): P0 → P1 → P2 → P3

P0 hit a runtime error → P1 forced visibility → P2 added controls → P3 closed on a cinematic WebGL2 final.

- Codex CLI GPT-5.5 xhigh (Codex CLI): P0 → P2 → P3

Three prompts queued in one go (P0 → P2 → P3). No back-and-forth, no intermediate captures.

- GPT-5.5 Thinking Standard (ChatGPT web): P0 → P1 → P2 → P3

P0 failed off-camera → archive starts at the P1 recovery → P2 / P3 polished. One typo-archived module-import block.

- Claude Design (Claude Design, bonus lane): P0 → P3

Treat as visual reference — Claude Design is tuned for art direction, not the engineering brief.

- Gemini 3.1 Pro (Gemini web): P0 → P2 → P3

Three sequential passes, no failures — strongest visual jump on P3, weakest spec adherence of the visible-water lanes.

- DeepSeek web Expert (DeepSeek web): P0 → P2 → P4 → P3

P0 → P2 polish → P4 fixed an undeclared `debugSelect` runtime error → P3 final.

- Kimi 2.6 Thinking (Kimi web): P0 → P3

P0 → P3, no failures. It skipped the harder shader asks rather than attempting them and breaking.

- Qwen 3.6 Max Preview (Qwen web): P0 → P1 → P3

P0 shader compile failed → P1 unblocked rendering → P3 simplified final.

- GLM-5.1 (Z.ai web): P0 → P1

P0 produced no canvas → P1 unblocked rendering — never reached a clean final in this round.

Note on the Codex CLI lane: three prompts were queued in one go (P0 → P2 → P3) and exactly one final HTML came back. Read it as multi-prompt execution, not first-shot fidelity.
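The per-model log above can also be kept as plain data, which makes questions like "which lanes needed a repair prompt?" easy to answer when re-running the round. This is a minimal sketch: the prompt chains are copied from the log, while the dictionary layout and the helper names `lanes_using` and `reached_final` are illustrative assumptions.

```python
# Prompt chains copied from the per-model log; the layout itself is illustrative.
PROMPT_LOG = {
    "Claude Opus 4.7": ["P0", "P2", "P3"],
    "GPT-5.5 Pro": ["P0", "P1", "P2", "P3"],
    "Codex CLI GPT-5.5 xhigh": ["P0", "P2", "P3"],
    "GPT-5.5 Thinking Standard": ["P0", "P1", "P2", "P3"],
    "Claude Design": ["P0", "P3"],
    "Gemini 3.1 Pro": ["P0", "P2", "P3"],
    "DeepSeek web Expert": ["P0", "P2", "P4", "P3"],
    "Kimi 2.6 Thinking": ["P0", "P3"],
    "Qwen 3.6 Max Preview": ["P0", "P1", "P3"],
    "GLM-5.1": ["P0", "P1"],
}


def lanes_using(prompt_id, log=PROMPT_LOG):
    """List the lanes whose chain includes the given prompt, in log order."""
    return [lane for lane, chain in log.items() if prompt_id in chain]


def reached_final(lane, log=PROMPT_LOG):
    """P3 is described as the closing prompt, so a chain ending in P3 reached a final."""
    return log[lane][-1] == "P3"


if __name__ == "__main__":
    print(lanes_using("P1"))  # lanes that needed the no-water repair
    print(lanes_using("P4"))  # ['DeepSeek web Expert']
    print([lane for lane in PROMPT_LOG if not reached_final(lane)])  # ['GLM-5.1']
```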

who won what, without drama.

Short summaries by category. Different rounds and different prompts will rearrange these — see the caveats section.

Claude Opus 4.7: Read the prompt most carefully and translated it into actual shader, physics and camera decisions. The microfacet final still looks like real water after multiple passes.

GPT-5.5 Pro: Strictest about the spec. First pass tripped a runtime error, but it locked back onto the visible-water requirement and rebuilt as a self-contained WebGL2 demo. Final ships with clear UI, boats and believable horizon haze.

Gemini 3.1 Pro: The third pass produced one of the most cinematic frames of the round. Caveat: the boats from the spec are barely visible and several engineering asks were quietly dropped.

Claude Design: Bonus visual lane. Does not strictly meet the engineering brief, but the atmosphere (horizon, color depth, lighting) actually feels cinematic. Treat as visual reference, not a benchmark winner.

Codex CLI GPT-5.5 xhigh and DeepSeek web Expert: Both shipped feature-rich panels (controls, buoyancy, wakes for Codex; heavy systems for DeepSeek). DeepSeek's water reads icy, though: a full UI doesn't guarantee convincing visuals.

GLM rendered no canvas; Qwen failed shader compile; GPT-5.5 Pro tripped a runtime error. Two of the three got there with a follow-up; GLM did not reach a clean final.

A single desktop, a single round, one prompt set. Different sessions, different timings or different prompt phrasings can each flip the order. Treat it as a snapshot.

how I scored it.

Each model gets a 1–10 score on four axes. Visuals are not the whole story — prompt fidelity counts the same.

Prompt fidelity: Did it actually meet the hard asks (single HTML, WebGL, procedural geometry, working controls, visible boats)? Engineering compliance, not interpretation.

Aesthetic: Water realism, lighting, color depth, atmospheric haze, composition. Does the scene feel beautiful and convincing in the browser?

UI/UX: Control panel design, parameter sliders, interactivity, frame rate, ease of use. The bits beyond just rendering pretty water.

Robustness: First-frame water visible, no runtime errors, no manual intervention needed to get a working artifact.
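If you re-run the round and want a single number per lane, one option is an unweighted mean over the four axes. This is a sketch under a stated assumption: the write-up only says prompt fidelity counts the same as visuals, so equal weights on all four axes, the `overall_score` helper and the example scores are hypothetical and not the published results.

```python
from statistics import mean

# The four axes used on every model card.
AXES = ("prompt_fidelity", "aesthetic", "ui_ux", "robustness")


def overall_score(scores):
    """Unweighted mean of the four 1-10 axis scores (equal weighting is an assumption)."""
    missing = [axis for axis in AXES if axis not in scores]
    if missing:
        raise ValueError(f"missing axes: {missing}")
    for axis in AXES:
        if not 1 <= scores[axis] <= 10:
            raise ValueError(f"{axis} must be between 1 and 10, got {scores[axis]}")
    return mean(scores[axis] for axis in AXES)


if __name__ == "__main__":
    # Hypothetical lane, not one of the models in this round.
    example = {"prompt_fidelity": 8, "aesthetic": 6, "ui_ux": 7, "robustness": 9}
    print(overall_score(example))  # 7.5
```

A single average hides exactly the trade-offs the cards describe, such as a cinematic frame with dropped engineering asks, so it is best read next to the per-axis scores rather than instead of them.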

ChatGPT web — both GPT-5.5 Pro and GPT-5.5 Thinking Standard.

Codex CLI in ~/Desktop/codexwater. The only command-line lane — it runs a queued P0 → P2 → P3 chain rather than chatting back and forth.

Claude.ai web on Opus 4.7 Adaptive, plus a few bonus runs from Claude Design for visual reference.

Gemini web — three sequential HTML iterations archived.

Z.ai web (GLM-5.1). The first run produced a blank canvas; the second one rendered.

Qwen Studio. The first shader failed to compile; the final is a simplified version that runs.

DeepSeek web Expert mode — first pass through PBR repair after a runtime error.

Kimi Thinking — two ocean stages archived.

this is one snapshot, not a leaderboard.

Subjective evaluation, one desktop, one round of testing. Read it that way.

Most lanes ran on each vendor's web UI — GPT, Claude, Gemini, DeepSeek, Kimi, Qwen, GLM. The only command-line lane is Codex CLI GPT-5.5 xhigh, and it is flagged separately on its model card and prompt log.

Different prompt phrasings, different chat sessions, different run timings can change the order. The point of this page is to make every prompt and every HTML public so you can re-run it yourself.

Read the scores as one person's qualitative read, not a peer-reviewed benchmark. If a result feels off for your use case, it probably is — your use case is different.